Understanding how users interact with your website is no longer a luxury; it is a necessity. Businesses compete not only on product and price but also on the quality of the user experience they provide. Heatmap A/B testing tools have become essential for identifying friction points, validating design decisions, and continuously improving digital experiences. By combining behavioral visualization with controlled experiments, these tools help teams replace guesses with data-driven decisions.

TL;DR: Heatmap A/B testing tools combine visual behavior tracking with controlled experiments to optimize user experience. Heatmaps reveal where users click, scroll, and hover, while A/B testing compares design variations to determine which performs best. Together, they provide actionable insights that reduce guesswork and improve conversions. Businesses that use both methods effectively can boost engagement, usability, and revenue.

What Are Heatmaps and Why Do They Matter?

A heatmap is a visual representation of user interaction data displayed using color gradients. Warmer colors like red and orange typically indicate high engagement, while cooler colors like blue and green signify lower interaction. Instead of reading rows of analytics reports, you can instantly see where attention is concentrated.

- Click heatmaps: Show where users click most frequently.

- Scroll heatmaps: Reveal how far down a page users typically scroll.

- Move or hover heatmaps: Track where users move their mouse.

- Attention heatmaps: Estimate which areas hold visitors' focus longest, typically based on how long each section stays visible on screen.
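Here is a minimal sketch of how a click heatmap could be built from the color-gradient idea. Raw click coordinates are binned into a grid, and each cell's count is normalized against the busiest cell. The function names, the 50-pixel cell size, and the color thresholds are hypothetical choices for illustration. They are not a description of how any particular tool works.

```python
from collections import Counter


def click_heatmap(clicks, page_width, page_height, cell_size=50):
    """Bin (x, y) click coordinates into a grid of normalized intensities.

    Returns a list of rows; each cell holds a value from 0.0 (no clicks)
    to 1.0 (the most-clicked cell on the page). Dimensions are integers in pixels.
    """
    cols = -(-page_width // cell_size)   # ceiling division
    rows = -(-page_height // cell_size)
    counts = Counter()
    for x, y in clicks:
        if 0 <= x < page_width and 0 <= y < page_height:  # ignore off-page clicks
            counts[(int(y // cell_size), int(x // cell_size))] += 1
    peak = max(counts.values(), default=0)
    grid = [[0.0] * cols for _ in range(rows)]
    for (row, col), n in counts.items():
        grid[row][col] = n / peak  # hottest cell becomes 1.0
    return grid


def color_for(intensity):
    """Map an intensity to a cool-to-warm color (hypothetical, arbitrary thresholds)."""
    if intensity >= 0.75:
        return "red"
    if intensity >= 0.5:
        return "orange"
    if intensity >= 0.25:
        return "green"
    return "blue"
```

Real tools add smoothing and overlay the grid on a page screenshot, but the core is this kind of aggregation.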

By identifying hotspots and neglected sections, designers and marketers gain immediate insight into what captures interest and what gets ignored. For example, a call-to-action button placed in a “cold” zone may explain lower-than-expected conversion rates.

The Role of A/B Testing in Optimization

While heatmaps show what users are doing, A/B testing determines which variation performs better. In an A/B test, two versions of a webpage (Version A and Version B) are shown to randomly assigned groups of users. Performance metrics, such as click-through rates, form submissions, or purchases, are then measured and compared.
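Testing tools handle the split between versions internally. One common approach is deterministic hashing, which keeps a returning visitor on the same version while spreading users roughly evenly. The sketch below assumes a hypothetical string user ID and experiment name. SHA-256 is used only as a convenient, evenly distributed hash.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split_b: float = 0.5) -> str:
    """Deterministically assign a user to 'A' or 'B'.

    Hashing experiment name + user ID means the same user always gets the
    same version within an experiment, while assignment across users
    behaves like a random split. split_b is the share of traffic sent to B.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # first 32 bits mapped to [0, 1)
    return "B" if bucket < split_b else "A"
```

Including the experiment name in the hash means a user's group in one test does not determine their group in the next.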

This experimental approach replaces guesswork with evidence. Rather than debating whether a red or a green button works better, teams rely on statistically significant results to decide.
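What "statistically significant" means depends on the test a tool runs. For a simple conversion metric, one standard choice is a two-sided two-proportion z-test. The sketch below implements it with Python's standard library and uses made-up traffic numbers. Commercial tools may use other methods, including Bayesian ones.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Uses the pooled proportion under the null hypothesis that A and B
    convert at the same rate. Returns (z, p_value).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # no variation at all (0% or 100% conversion in both groups)
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value
```

Take 200 conversions out of 5,000 visitors for A and 250 out of 5,000 for B. Here z is about 2.41 and the p-value about 0.016, which is below the conventional 0.05 threshold.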

Common elements tested include:

- Headlines and messaging

- Call-to-action text and placement

- Page layouts

- Images and videos

- Pricing displays

- Forms and checkout flows

However, A/B testing alone does not explain why a variation wins. That is where heatmaps add essential context.

Why Combining Heatmaps and A/B Testing Is So Powerful

When used together, heatmaps and A/B testing provide both qualitative and quantitative insights. A/B testing tells you which version wins. Heatmaps show you how users interact with each version.

For example, imagine you test two landing page layouts, and Version B's conversion rate is 15% higher than Version A's. Heatmap data might reveal that in Version B:

- More users scroll past the hero section.

- Attention clusters around testimonials.

- The call-to-action receives significantly more clicks.

This layered understanding explains why the current variation won, and it also informs future design strategies.
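Figures like the 15% in the example above are usually relative lifts. Conversion rates are often small, so it helps to report absolute and relative lift side by side. Here is a small illustration with invented rates:

```python
def lift(rate_a, rate_b):
    """Return (absolute lift in percentage points, relative lift as a fraction).

    rate_a is the control's conversion rate and rate_b the variant's,
    both expressed as fractions (0.04 means 4%).
    """
    absolute = rate_b - rate_a
    relative = absolute / rate_a
    return absolute * 100, relative
```

A conversion rate that moves from 4.0% to 4.6% is only a 0.6 percentage-point absolute lift. It is also a 15% relative lift.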

Key Features to Look For in Heatmap A/B Testing Tools

Not all optimization tools are created equal. When evaluating solutions, consider these important features:

1. Real-Time Data Collection

Access to real-time or near-real-time data allows faster iteration cycles and quicker decision-making.

2. Segmentation Capabilities

Segment data by device type, traffic source, geography, or user behavior. Mobile users may interact very differently from desktop visitors.
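Here is a minimal sketch of segmentation. It assumes each session is recorded as a dictionary with hypothetical `variant`, `converted`, and segment fields such as `device`. Another attribute, such as traffic source, can be passed as the key. Grouping by variant and segment together shows whether a winning version wins on every device type.

```python
from collections import defaultdict


def conversion_by_segment(sessions, segment_key="device"):
    """Conversion rate for each (variant, segment) pair.

    Each session is assumed to be a dict with hypothetical 'variant',
    'converted' (bool), and segment fields such as 'device' or 'source'.
    """
    totals = defaultdict(lambda: [0, 0])  # (variant, segment) -> [conversions, sessions]
    for s in sessions:
        bucket = totals[(s["variant"], s[segment_key])]
        bucket[0] += int(s["converted"])
        bucket[1] += 1
    return {key: conv / n for key, (conv, n) in totals.items()}
```

Small segments produce noisy rates. A per-segment difference deserves the same significance check as the overall result.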

3. Session Recordings

Session replays help you see the complete user journey, exposing friction points that heatmaps alone might not reveal.

4. Statistical Confidence Indicators

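Strong A/B testing tools report statistical significance clearly, for example through p-values, confidence intervals, or the probability that a variation beats the control. This lets you implement winning variations with confidence.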
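One indicator worth looking for is a confidence interval on the difference between variants. It shows the plausible size of the effect, not just whether an effect exists. Below is a minimal sketch using the normal-approximation (Wald) interval. This is one of several methods, and it can be unreliable for very small samples or rates near 0 or 1.

```python
from math import sqrt
from statistics import NormalDist


def diff_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Wald confidence interval for (rate_B - rate_A), as fractions."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)  # unpooled standard error
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    diff = p_b - p_a
    return diff - z * se, diff + z * se
```

Take 200/5,000 versus 250/5,000 conversions. The 95% interval runs from roughly +0.19 to +1.81 percentage points. Because it excludes zero, B's advantage is unlikely to be noise, but the true lift could lie anywhere in that range.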

5. Integration with Analytics Platforms

Integration with your existing analytics and marketing tools keeps workflows connected. It also lets you analyze experiment results alongside your broader data.

Practical Use Cases for Heatmap A/B Testing

Let’s explore how businesses use these tools in real-world scenarios.

Landing Page Optimization

Heatmaps might reveal that users ignore key messaging placed below the fold. An A/B test could reposition that content higher on the page to evaluate impact on conversion rates.
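Scroll heatmaps are typically built from each session's maximum scroll depth. The sketch below assumes depths are recorded as a percentage of page height, which is a hypothetical data format. It computes the share of sessions that reached each depth, which tells you whether messaging placed lower on the page is seen at all.

```python
def scroll_reach(max_depths, step=10):
    """Share of sessions whose deepest scroll reached each depth.

    max_depths: one value per session, the deepest point scrolled,
    as a percentage of page height (0-100). Returns {depth: share}.
    """
    if not max_depths:
        return {}
    n = len(max_depths)
    return {depth: sum(d >= depth for d in max_depths) / n
            for depth in range(0, 101, step)}
```

Suppose the key messaging sits at 60% of the page height. Then `scroll_reach(depths)[60]` is the fraction of visitors who could have seen it, which gives a baseline for the repositioning test.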

Checkout Flow Improvements

Scroll maps and click maps often reveal drop-off points in multi-step checkouts. Testing simplified forms or clearer progress indicators can then reduce cart abandonment.
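Drop-off points are easiest to compare as step-to-step rates. Here is a minimal sketch, assuming you already have the number of sessions reaching each checkout step. The step names and counts are hypothetical.

```python
def funnel_dropoff(steps):
    """Continuation and drop-off rate between consecutive funnel steps.

    steps: ordered list of (step_name, sessions_reaching_step).
    Returns a list of (transition, continuation_rate, dropoff_rate).
    """
    report = []
    for (name, count), (next_name, next_count) in zip(steps, steps[1:]):
        continued = next_count / count if count else 0.0
        report.append((f"{name} -> {next_name}", continued, 1 - continued))
    return report
```

Suppose 1,000 sessions reach the cart, 600 reach shipping, 450 reach payment, and 400 reach confirmation. The cart-to-shipping transition then has the largest drop-off (40%), which makes it the natural first candidate for a test.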

Content Engagement

Blog publishers use heatmap insights to identify which sections hold readers’ attention. A/B tests can then compare headline or featured-image variants to see which increases time on page.

Mobile Experience Refinement

Mobile users behave differently because of smaller screens and touch interactions. Heatmap tools highlight where taps concentrate, while A/B tests validate layout adjustments tailored specifically to mobile audiences.

Common Mistakes to Avoid

Despite their effectiveness, heatmap A/B testing tools are often misused. Avoid these pitfalls:

- Running tests without enough traffic: Insufficient sample size produces unreliable results.

- Testing too many variables at once: Isolate one key element for more accurate conclusions.

- Ignoring qualitative context: Numbers alone do not tell the full story; observe how users actually behave.

- Ending tests too early: Run each test to its planned sample size instead of acting on early trends or stopping the moment results first look significant.

- Failing to form a hypothesis: Always begin with a clear, measurable objective.
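The first and fourth pitfalls have the same remedy: decide the sample size before launch and run the test until it is reached. Below is a minimal sketch of the standard normal-approximation formula for comparing two conversion rates. It assumes a two-sided test with equal traffic per variant. The baseline and target rates are hypothetical inputs that you would set from your own data.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(p_baseline, p_expected, alpha=0.05, power=0.8):
    """Visitors needed in EACH variant to detect p_baseline -> p_expected.

    Two-sided test at significance level alpha with the given power.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_baseline + p_expected) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p_baseline * (1 - p_baseline) + p_expected * (1 - p_expected))
    ) ** 2
    return ceil(numerator / (p_expected - p_baseline) ** 2)
```

Detecting a lift from 4% to 5% at 5% significance and 80% power needs roughly 6,700 visitors per variant. Halving the detectable difference (4% to 4.5%) roughly quadruples that number. This is why low-traffic pages struggle to produce reliable results.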

Optimization is a continuous process, not a one-time project. Avoid the temptation to treat it as a quick fix.

Data-Driven Hypothesis Building

Successful experimentation begins with structured thinking. A strong hypothesis often follows this format:

If we change [element], then [expected improvement] will occur because [reason based on data].

For instance:

If we move the primary CTA above the fold, conversions will increase because heatmap data shows limited scrolling beyond the hero section.

This approach aligns insights from heatmaps with testable predictions in A/B experiments.

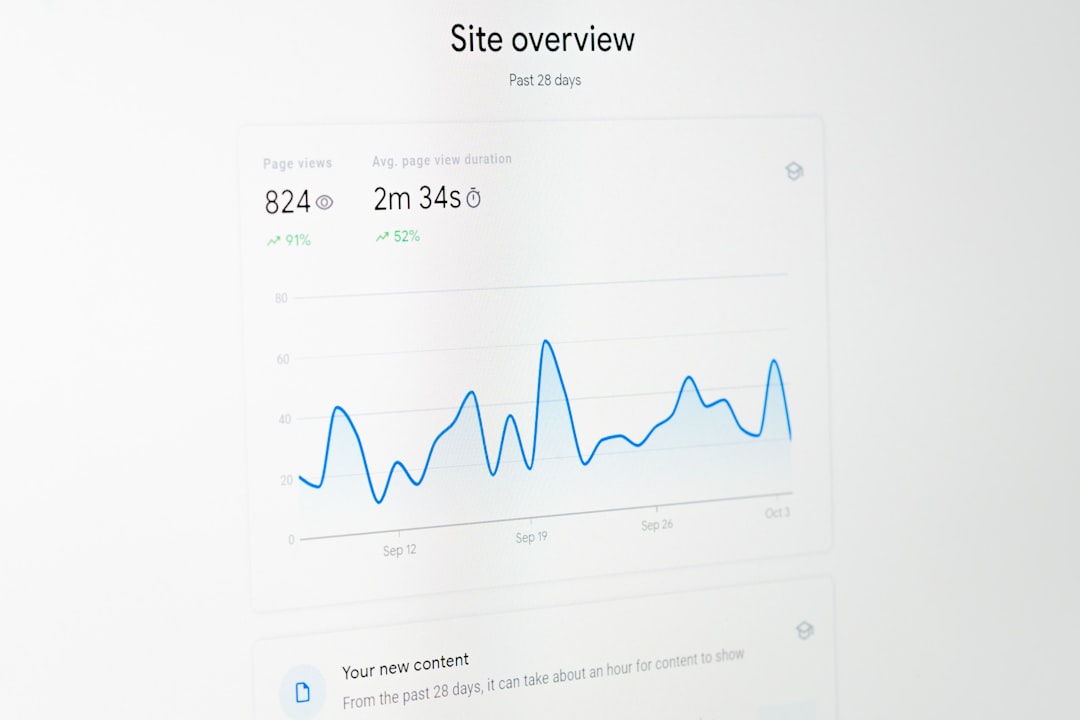

Measuring Success Beyond Conversions

While conversions are a primary metric, they are not the only indicator of user experience improvement. Consider tracking:

- Engagement rate

- Time on page

- Bounce rate

- Customer satisfaction scores

- Net Promoter Score (NPS)

- Task completion rates
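Most of these metrics are simple ratios, but NPS has a specific definition worth getting right. Respondents rate how likely they are to recommend you on a 0-10 scale. The score is the percentage of promoters (9-10) minus the percentage of detractors (0-6). Here is a minimal sketch:

```python
def net_promoter_score(ratings):
    """NPS from 0-10 ratings: % promoters (9-10) minus % detractors (0-6).

    The result ranges from -100 to 100; passives (7-8) count only in the total.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(r >= 9 for r in ratings)
    detractors = sum(r <= 6 for r in ratings)
    return 100 * (promoters - detractors) / len(ratings)
```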

Optimizing user experience is about reducing friction and improving clarity, not just increasing immediate sales.

The Psychological Dimension of Heatmaps

Heatmaps reveal behavioral patterns shaped by cognitive psychology. Users often scan text-heavy pages in an F-pattern, and they respond to contrast, whitespace, and directional cues. Understanding these principles helps teams design experiments grounded in human behavior.

For example, a brightly colored CTA may attract clicks. If it clashes with the overall aesthetic, however, it could reduce trust. Heatmap data combined with thoughtful A/B testing shows whether such psychological assumptions hold true in practice.

Continuous Optimization Culture

Organizations that excel at user experience treat optimization as an ongoing discipline. They create structured experimentation roadmaps and prioritize test ideas based on expected impact and effort.
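Scoring frameworks vary; ICE and PIE are common examples. The sketch below uses the simplest version and ranks ideas by estimated impact divided by estimated effort. The 1-10 scales, the `ExperimentIdea` type, and the example ideas are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    name: str
    impact: int  # estimated impact, 1-10 (hypothetical team estimate)
    effort: int  # estimated effort, 1-10 (hypothetical team estimate)

    @property
    def score(self) -> float:
        return self.impact / self.effort


def prioritize(ideas):
    """Return ideas sorted from highest to lowest impact-per-effort score."""
    return sorted(ideas, key=lambda idea: idea.score, reverse=True)
```

A quick headline change (impact 5, effort 1) would outrank a checkout redesign (impact 9, effort 8) under this scheme. Teams that want to weigh confidence in the estimate would add it as a third factor.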

A sustainable optimization workflow often includes:

- Research: Analyze analytics, heatmaps, and user feedback.

- Hypothesis creation: Develop clear, testable ideas.

- Experiment execution: Run controlled A/B tests.

- Analysis: Validate statistical significance.

- Iteration: Implement winning variations and repeat.

This cycle ensures continuous growth rather than isolated improvements.

The Future of Heatmap A/B Testing Tools

With advancements in artificial intelligence and machine learning, modern tools increasingly automate insight generation. Instead of manually scanning heatmaps, algorithms highlight anomalies and recommend potential experiments.

Predictive experimentation is also emerging, where platforms estimate the probability of success before launching full-scale tests. As personalization becomes more sophisticated, real-time adaptation based on user behavior could redefine the traditional A/B testing model.

Yet even as technology advances, the core principle remains the same: observe behavior, test thoughtfully, and optimize continuously.

Final Thoughts

Heatmap A/B testing tools bridge the gap between observation and action. Heatmaps provide intuitive visual insights into user behavior, while A/B tests validate strategies with measurable evidence. Together, they form a powerful framework for improving digital experiences.

In a competitive digital landscape, small optimizations compound over time. A slight increase in click-through rate, a reduction in checkout friction, or better content engagement can significantly impact overall performance. By embracing a culture of experimentation supported by heatmaps and controlled testing, businesses can create experiences that are not only functional but truly user-centered.